Cactus Compute

A hybrid AI toolkit for deploying speech, vision, and text models efficiently.

About Cactus Compute

Cactus: On-device AI for Smartphones, Laptops & Edge

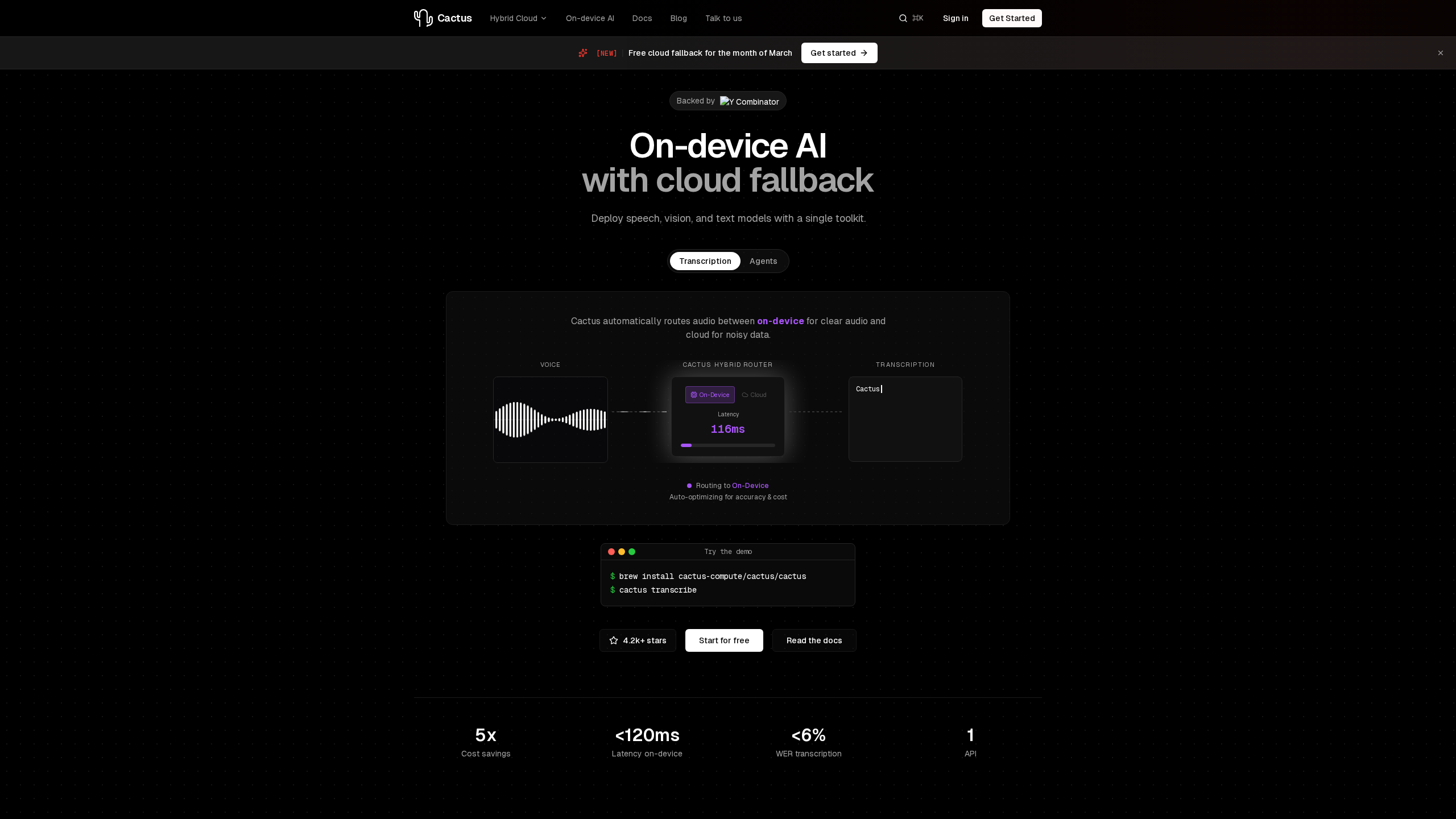

Cactus is a hybrid cloud and on-device AI toolkit that allows developers to deploy speech, vision, and text models. Powered by the Cactus Engine, it automatically routes tasks between on-device processing for simple operations and cloud processing for complex or noisy data, optimizing for both accuracy and cost.
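The routing idea described above can be sketched in a few lines. This is only an illustration of the general technique, not the Cactus Engine's implementation or API: the `Task` type, the `choose_route` function, the complexity score, the signal-to-noise estimate, and both thresholds are hypothetical and chosen purely for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; not values used by Cactus.
MAX_ON_DEVICE_COMPLEXITY = 0.6  # tasks scored above this go to the cloud
MIN_ON_DEVICE_SNR_DB = 15.0     # audio noisier than this goes to the cloud


@dataclass
class Task:
    complexity: float  # 0.0 (simple) to 1.0 (complex), assumed pre-computed
    snr_db: float      # estimated signal-to-noise ratio of the audio, in dB


def choose_route(task: Task) -> str:
    """Return "on_device" for simple, clean input and "cloud" otherwise."""
    if task.complexity > MAX_ON_DEVICE_COMPLEXITY:
        return "cloud"  # complex request: prefer cloud accuracy
    if task.snr_db < MIN_ON_DEVICE_SNR_DB:
        return "cloud"  # noisy audio: prefer cloud accuracy
    return "on_device"  # simple and clean: keep it local and cheap
```

A simple command on clean audio, such as `Task(complexity=0.2, snr_db=30.0)`, stays on-device under these assumed thresholds, while the same command recorded in heavy noise would be sent to the cloud.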

Key Features

- Hybrid Routing: Automatically routes audio and commands between on-device and cloud processing based on task complexity and audio quality, handling over 80% of production transcription and LLM inference on-device.

- Optimized Execution & Memory: Features quantized models with hardware-specific acceleration for battery-efficient inference, alongside zero-copy memory mapping for minimal RAM usage and near-instant model loading.

- Cross-Platform SDK: Write once and deploy across iOS, Android, macOS, and wearables.

- High Performance: Delivers on-device latency under 120 ms and a Word Error Rate (WER) under 6% for transcription.

- Privacy Controls: Lets you lock transcription to on-device only, so audio data never leaves the device. It is HIPAA-friendly, GDPR-compliant, and features zero data retention.

- Open Source: The Cactus Engine is fully auditable and community-driven, allowing developers to inspect the code running on user devices.
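For readers unfamiliar with the WER figure quoted above, Word Error Rate is conventionally defined as the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of words in the reference. The sketch below computes it with a standard word-level edit distance. The function name `word_error_rate` is an illustrative choice, and how Cactus measures its reported figure (test set, text normalization) is not stated here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (classic Levenshtein, one row at a time).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # aligning i reference words to nothing costs i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)
```

For example, scoring the transcript "turn on kitchen light" against the reference "turn on the kitchen lights" counts one deletion and one substitution over five reference words, for a WER of 0.4 (40%).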

Use Cases

- Mobile Voice Assistants: Real-time voice commands and dictation for iOS and Android applications.

- Desktop Notetakers: Meeting transcription and note-taking for macOS, featuring automatic speaker detection.

- Wearable Intelligence: Always-on transcription for smart glasses and AR devices with minimal battery impact.

Pricing

Cactus is free to start and scales with usage. It also offers a free cloud fallback promotion for the month of March.

Getting Started

Website: https://www.cactuscompute.com

Cactus provides a unified API for hybrid inference, delivering the privacy and low latency of on-device AI with the accuracy of cloud computing for modern applications.